When teams evaluate infrastructure for AI fine-tuning, they often focus on model size, GPU resources, and monthly cost. Those factors matter, but they are not the only ones that shape real development workflows. For many teams in Asia-Pacific, infrastructure location, remote accessibility, operational habits, and long-term manageability can be just as important. Fine-tuning is not only a compute task. It is an iterative process that includes dataset preparation, debugging, testing, collaboration, and repeated validation across multiple stakeholders.

That is why a Japan-based VPS can be a practical option for certain AI projects. It may offer a better regional base for APAC teams, especially when the workload involves internal tools, proof-of-concept development, domain-specific adaptation, or moderate-scale experimentation.

This article explains what AI teams actually need for fine-tuning, how to think about Windows and Linux environments, what to check before choosing a VPS, and when a Japan-based setup is a better fit than a larger cloud GPU platform.

AI Fine-Tuning in APAC: Why Infrastructure Location Still Matters

Model size, GPU availability, and monthly cost tend to dominate infrastructure evaluations, but location still plays an important role, especially for development teams working across Asia-Pacific (APAC). Fine-tuning is an iterative workflow: dataset preparation, remote access, debugging, model evaluation, internal collaboration, and repeated testing all happen against the same remote environment, so where that environment sits shapes everyday work.

For APAC development teams, infrastructure location can directly affect the speed and efficiency of fine-tuning workflows.

Latency, Collaboration, and Access Across APAC Teams

In a real development workflow, engineers do more than launch training jobs and wait for results. They connect to remote environments, move datasets, check logs, adjust scripts, test inference behavior, and share results across teams. If the environment is hosted far from the team, each of those steps pays for the extra network round-trip time: interactive sessions feel sluggish and transfers take longer. Across many iterations, those small delays add up to lost working time.

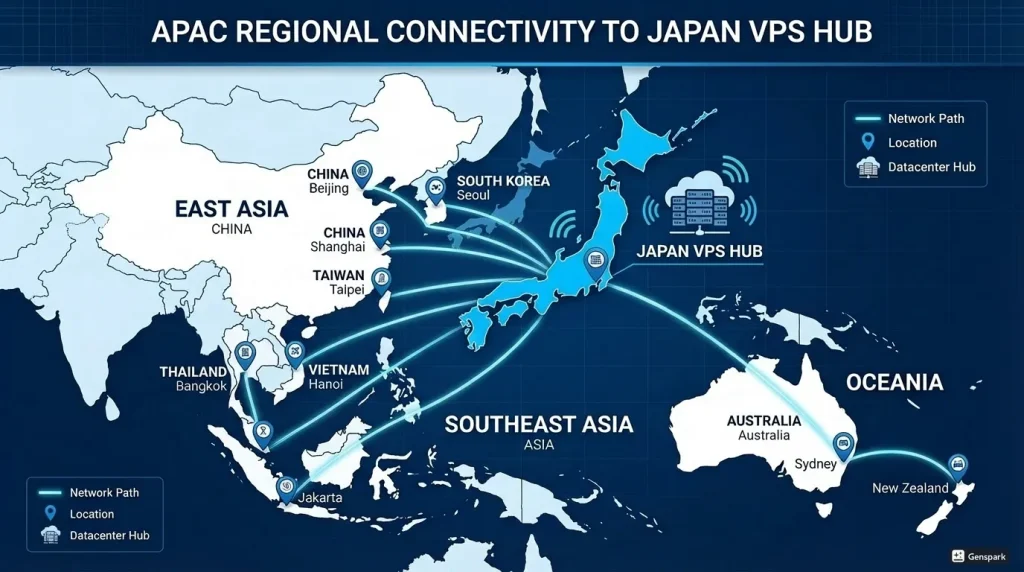

For teams spread across East Asia, Southeast Asia, and Oceania, a Japan-based environment can serve as a practical regional hub. It may be easier to access than infrastructure hosted in a more distant region, and it can improve day-to-day responsiveness for remote administration, file transfers, monitoring, and review cycles. That matters when a project depends on constant iteration rather than a single large training run.
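One way to ground the location discussion is to measure it from where the team actually sits. The following is a minimal sketch, not a benchmark: it times a TCP handshake to a candidate server and takes the median of a few attempts. The hostnames in the commented example are hypothetical placeholders, and the result also includes DNS lookup time when a hostname is used.

```python
import socket
import statistics
import time


def tcp_connect_ms(host: str, port: int, timeout: float = 3.0) -> float:
    """Time one TCP connection attempt to host:port, in milliseconds."""
    start = time.perf_counter()
    with socket.create_connection((host, port), timeout=timeout):
        pass  # connection established; close it immediately
    return (time.perf_counter() - start) * 1000.0


def median_connect_ms(host: str, port: int, samples: int = 5) -> float:
    """Median over several attempts, which is less sensitive to one-off spikes."""
    return statistics.median(tcp_connect_ms(host, port) for _ in range(samples))


# Example (hypothetical hostnames; assumes the SSH port 22 is reachable):
# for host in ("tokyo.example.com", "us-east.example.com"):
#     print(host, round(median_connect_ms(host, 22), 1), "ms")
```

Running this from each office or home network the team uses gives a rough comparison of candidate regions before committing to one.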

Location can also affect collaboration between technical and non-technical stakeholders. A product manager reviewing outputs, a QA team member testing a chatbot, or a business team validating domain-specific responses may all need access to a staging environment. A regionally closer server can make those testing cycles more efficient.

Data Handling and Regional Deployment Considerations

Location is not only about speed. It can also influence how teams think about data handling, internal governance, and deployment planning. Some organizations in APAC prefer to keep development infrastructure closer to where teams operate or where a service will eventually be deployed. Even when there is no strict compliance requirement, proximity can simplify internal discussions around architecture, operational visibility, and network design.

For example, a company building an internal assistant for Japanese-language documents, customer support content, or operational manuals may prefer to fine-tune and test in an environment that is geographically close to its users and internal systems. A Japan-based VPS can make the overall architecture better aligned with the intended use case.

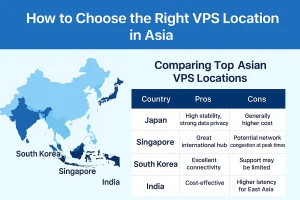

This does not mean Japan is always the best option for every APAC project. However, it does mean that infrastructure location should be treated as a meaningful design decision rather than an afterthought.

Why Japan Can Be a Practical Base for AI Development in Asia-Pacific

Japan is often considered a strong infrastructure location because of its connectivity, operational reliability, and strategic position in the region. For AI teams, those characteristics matter when deciding where development, testing, and internal deployment should take place.

For many APAC teams, Japan can be a practical location for AI development, testing, and internal deployment.

A Central and Reliable Location for Regional Teams

From an APAC perspective, Japan can serve as a practical regional base for teams that need stable access across multiple markets. It is especially relevant when a project involves Japanese-language data, Japan-focused services, or engineering collaboration across nearby countries and territories. Even outside Japan-specific projects, a server in Japan can be a reasonable operational center for teams working across the broader region.

That makes a Japan-based VPS attractive for operational and organizational reasons as well as performance. Teams may prefer a location close to their actual business environment over a distant region chosen only because it is common in global cloud examples.

Operational Stability for Testing, Iteration, and Internal Tools

Not every AI project begins as a large customer-facing platform. Many start as internal tools, pilot programs, or controlled experiments. In these stages, operational stability is often more valuable than maximum scale. Teams need an environment where they can prepare datasets, run training jobs, test inference behavior, and share access safely with internal stakeholders.

A VPS can support that need effectively. It gives teams more direct control over the environment and can be easier to manage than a rapidly expanding set of managed services. For example, a small development team may prefer to operate a defined server environment for a document assistant or classification model rather than assemble a more fragmented architecture too early in the project.

Japan-based hosting can be especially practical when the eventual production path may also involve Japan-based systems, users, or integrations. Development decisions often become easier when the testing environment already reflects the geographic context of the intended service.

When a Japan-Based Environment Makes More Sense Than a Distant Region

There are many situations where a large public cloud region outside APAC is technically possible but not operationally ideal. If your developers are in Asia, your users are in Asia, and your data flows are primarily in Asia, a distant region may introduce unnecessary friction. That friction may appear in daily remote access, team coordination, staging validation, or data movement.

A Japan-based VPS can offer a middle ground. It is close enough to support regional workflows, yet simple enough for controlled experiments, internal tools, and moderate-scale model adaptation. For teams that want a manageable starting point instead of an immediately complex cloud architecture, this can be a strong fit.

What AI Teams Actually Need for Fine-Tuning Workloads

Many teams overestimate the infrastructure required to start fine-tuning. That is understandable because discussions about AI infrastructure often focus on the largest and most expensive examples. In practice, many business use cases rely on smaller, more targeted adaptation workflows. Before choosing infrastructure, it helps to separate fine-tuning from full model training.

For many teams, the key question is not how to build the largest environment, but how to choose infrastructure that fits practical fine-tuning workloads.

Fine-Tuning vs. Full Model Training

Training a model from scratch is very different from fine-tuning an existing model. Full model training requires massive datasets, long training cycles, and substantial compute resources. Fine-tuning, by contrast, starts with a pre-trained model and adapts it to a narrower purpose. That purpose may include domain terminology, task-specific behavior, output style, multilingual handling, or company-specific knowledge patterns.

For many APAC teams, the real goal is not to build a foundation model from zero. It is to make an existing model more useful for a specific business task. That changes the infrastructure requirement significantly. Teams may be able to work with a smaller and more manageable environment, especially during proof-of-concept and early validation stages.

GPU Memory, Storage, and Remote Access Requirements

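Even lightweight fine-tuning still requires careful resource planning. GPU memory is often the most important constraint: VRAM has to hold the model weights plus the gradients, optimizer state, and activations for the parameters being trained. If the combination of model, training method, quantization strategy, and batch size does not fit within the available VRAM, the job either fails with an out-of-memory error or has to fall back on slower workarounds such as a smaller batch size or offloading to system memory. Teams should also consider system memory, storage performance, and dataset size.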
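A back-of-the-envelope estimate can show early whether a plan is realistic. The sketch below is a rough planning aid under stated assumptions, not a measurement: it assumes 14 bytes of training state per trainable parameter (a 16-bit gradient, a 32-bit master copy, and two 32-bit Adam moments) and a flat 20% allowance for activations and buffers. Real usage depends on batch size, sequence length, and framework, so the function name and default values are illustrative only.

```python
GIB = 2**30


def estimate_vram_gib(
    num_params: float,
    weight_bytes: float = 2.0,        # 16-bit weights; 0.5 approximates 4-bit quantization
    trainable_fraction: float = 1.0,  # 1.0 = full fine-tuning; far smaller for adapter methods
    train_state_bytes: float = 14.0,  # ASSUMPTION: grad (2) + fp32 master copy (4) + Adam moments (8)
    overhead_fraction: float = 0.2,   # ASSUMPTION: crude allowance for activations and buffers
) -> float:
    """Rough VRAM estimate in GiB for a fine-tuning run."""
    weights = num_params * weight_bytes
    training_state = num_params * trainable_fraction * train_state_bytes
    return (weights + training_state) * (1.0 + overhead_fraction) / GIB


# Compare two plans for a hypothetical 7-billion-parameter model.
full = estimate_vram_gib(7e9)
adapter = estimate_vram_gib(7e9, weight_bytes=0.5, trainable_fraction=0.005)
print(f"full fine-tuning: ~{full:.0f} GiB, quantized adapter tuning: ~{adapter:.1f} GiB")
```

The absolute numbers are approximate, but the gap between the two plans is the point: the training method and quantization choice change the hardware class required, not just the price.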

Storage often receives less attention than it deserves. Training data, intermediate checkpoints, logs, embeddings, and evaluation outputs can add up quickly. Slow storage can affect data preparation and experiment turnover. A fine-tuning environment should support consistent read and write performance, especially when developers repeatedly revise datasets and rerun experiments.
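To see how quickly these files add up, a simple footprint estimate helps when sizing a disk. This is a minimal sketch with hypothetical figures; the function name, the per-run allowance for logs and evaluation outputs, and the example numbers are all assumptions chosen for illustration.

```python
def project_storage_gib(
    dataset_gib: float,
    checkpoint_gib: float,
    checkpoints_per_run: int,
    runs: int,
    other_gib_per_run: float = 1.0,  # ASSUMPTION: logs, embeddings, evaluation outputs
) -> float:
    """Estimate total disk usage if every run's checkpoints are kept."""
    per_run = checkpoint_gib * checkpoints_per_run + other_gib_per_run
    return dataset_gib + per_run * runs


# Hypothetical project: 20 GiB dataset, 13 GiB checkpoints, 3 kept per run, 10 runs.
total = project_storage_gib(20, 13, 3, 10)
print(f"{total:.0f} GiB")  # 20 + (13 * 3 + 1) * 10 = 420 GiB
```

In this hypothetical case the checkpoints, not the dataset, account for most of the disk, which is why a retention policy is worth deciding early.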

Remote access also matters more than many teams expect. Some workflows are fully command-line based, but others involve mixed users and mixed tools. Data scientists may use notebooks and Python environments, while infrastructure teams may prefer standardized remote access methods. A VPS can be attractive when a team wants a controlled environment that can be accessed and maintained without the complexity of a large cloud stack.

Why LoRA and Lightweight Adaptation Change the Infrastructure Equation

Modern fine-tuning approaches such as LoRA and other parameter-efficient methods have made AI customization more accessible. Instead of updating every parameter in a model, LoRA freezes the pre-trained weights and trains small low-rank matrices added to selected layers; other parameter-efficient methods likewise train only a small number of parameters. This reduces compute and memory requirements and makes it possible to run useful experiments without treating every project like a hyperscale infrastructure problem.

That is one reason a Japan-based VPS can be a practical option for many AI teams. If the goal is to adapt a model for internal search, customer support assistance, document classification, or domain-specific generation, the required environment may be far smaller than what is needed for large multi-GPU distributed training. A well-chosen VPS can be enough to validate ideas, build prototypes, and support ongoing refinement.
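The size of the reduction is easy to work out for a single layer. For a weight matrix of shape `d_out x d_in`, LoRA trains two low-rank factors of shapes `d_out x r` and `r x d_in`, so the trainable count is `r * (d_in + d_out)` instead of `d_in * d_out`. The sketch below only does this arithmetic; the layer size and rank are illustrative values, not a recommendation.

```python
def full_params(d_in: int, d_out: int) -> int:
    """Parameters updated when the whole weight matrix is trained."""
    return d_in * d_out


def lora_params(d_in: int, d_out: int, rank: int) -> int:
    """Parameters trained by LoRA: factors of shape (d_out x rank) and (rank x d_in)."""
    return rank * (d_in + d_out)


# Illustrative layer: a 4096 x 4096 projection adapted with rank 8.
d_in, d_out, rank = 4096, 4096, 8
ratio = lora_params(d_in, d_out, rank) / full_params(d_in, d_out)
print(lora_params(d_in, d_out, rank), full_params(d_in, d_out), f"{ratio:.2%}")
# 65536 16777216 0.39%
```

Because gradients and optimizer state are only needed for the trained parameters, this is where most of the memory saving comes from.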

Windows VPS or Linux Environment: Which Setup Is the Better Fit?

When planning AI infrastructure, teams often ask whether they should use Windows or Linux. There is no universal answer. The better choice depends on the team’s workflows, administration habits, tooling requirements, and the people who will operate the environment after the prototype stage.

For many teams, the decision is less about technical ideology and more about operational fit.

When Windows VPS Is Easier for Remote Administration

A Windows VPS can be attractive when an organization already relies on Windows-based operational practices. Remote Desktop access is familiar to many IT teams, and a graphical environment can reduce friction for stakeholders who are not deeply comfortable with command-line workflows. This can be especially useful in early project stages when multiple roles need access to and visibility into the same environment.

For teams working on internal AI pilots, a Windows-based setup may simplify access management, handoff, and routine administration. It can be particularly helpful when the project is shared between developers and infrastructure or support teams that already manage Windows systems.

When Linux-Based Workflows Are a Better Fit for ML Tooling

At the same time, many machine learning frameworks, reference examples, and deployment patterns are more closely associated with Linux environments. Python-based tooling, container workflows, GPU drivers, and model-serving stacks are often documented with Linux-first assumptions. For teams with established ML engineering practices, Linux may feel more natural and more flexible.

If your developers are already comfortable with shell-based workflows, virtual environments, package management, and Linux-based ML orchestration, Linux may be the more efficient option for fine-tuning.

Choosing Based on Your Team’s Operational Habits

The most practical decision is often the one that matches how your team already works. If your infrastructure staff prefers Windows-based remote management and your AI workload is moderate, a Windows VPS may provide the most accessible path. If your ML engineers already operate Linux pipelines and expect that environment, Linux is likely the better fit.

The important point is to choose an environment that supports both model work and operational sustainability. Even a technically capable setup becomes less valuable if it creates constant friction for the people who maintain it.

What to Check Before Choosing a VPS for AI Fine-Tuning

Before selecting a VPS, it helps to evaluate the workload as clearly as possible. Not every AI project fits the same server profile or resource plan, and not every fine-tuning task should be treated as a general-purpose hosting problem.

GPU Availability, Scalability, and Workload Scope

Start by defining the actual workload.

- What model family are you using?

- How large is the model?

- Are you performing lightweight adaptation, instruction tuning, or a more intensive process?

- How often will the environment be used, and by how many people?

These questions help determine whether a VPS is enough or whether a larger GPU platform is required.
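The questions above can be captured in a small record so that the answers are written down and reviewed rather than assumed. This is a hedged sketch: the class, field names, and the decision rule are hypothetical, and the rule simply encodes this article's own guidance that full training, multi-GPU jobs, and highly elastic workloads point toward a larger platform.

```python
from dataclasses import dataclass


@dataclass
class WorkloadProfile:
    model_family: str
    model_params_billion: float
    method: str                      # "lora", "instruction_tuning", or "full_training"
    users: int                       # how many people will share the environment
    needs_multi_gpu: bool = False
    needs_elastic_scaling: bool = False


def suggest_platform(w: WorkloadProfile) -> str:
    """Very coarse first-pass suggestion; a starting point for discussion only."""
    if w.method == "full_training" or w.needs_multi_gpu or w.needs_elastic_scaling:
        return "larger cloud GPU platform"
    return "VPS"


# Hypothetical pilot: adapter tuning of a 7B model shared by three people.
pilot = WorkloadProfile("example-7b", 7.0, "lora", users=3)
print(suggest_platform(pilot))  # VPS
```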

You should also think about future scalability. A VPS may be ideal for a proof of concept, but your team may later need more GPU capacity, a different storage design, or a more automated training pipeline. Planning for that possibility early can prevent a painful migration later.

Storage Performance, Backup, and Dataset Handling

Do not choose an environment based only on GPU-related specifications. Dataset handling, snapshot strategy, backups, and storage expansion all matter. Fine-tuning workflows often generate multiple iterations of data, scripts, checkpoints, and evaluation files. A practical environment should support organized storage, backup, and recovery planning.
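Organized storage usually includes a retention rule for checkpoints. The following is a minimal sketch of one such rule, assuming checkpoints live in a single directory and follow a `checkpoint-*` naming pattern (a hypothetical convention here); it keeps the most recently modified entries and deletes the rest. Anything worth preserving should be copied to backup before a script like this runs.

```python
import shutil
from pathlib import Path


def prune_checkpoints(directory: Path, keep: int = 3, pattern: str = "checkpoint-*") -> list[Path]:
    """Delete all but the `keep` newest matches in `directory`; return the removed paths."""
    if keep < 1:
        raise ValueError("keep must be at least 1")
    # Newest first, judged by last-modified time.
    candidates = sorted(directory.glob(pattern), key=lambda p: p.stat().st_mtime, reverse=True)
    removed = []
    for path in candidates[keep:]:
        if path.is_dir():
            shutil.rmtree(path)  # checkpoint stored as a directory of files
        else:
            path.unlink()        # checkpoint stored as a single file
        removed.append(path)
    return removed


# Example (hypothetical path):
# prune_checkpoints(Path("/data/runs/support-bot"), keep=3)
```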

If the project touches business data, internal documents, or sensitive operational content, backup and access design should be discussed from the beginning. A reliable environment is not only fast when everything works. It is also manageable when something goes wrong.

Security, Access Control, and Long-Term Operating Costs

Security should be considered early, especially for internal AI projects. Teams need to define who can access the environment, how credentials are handled, and how remote access is monitored. A centralized VPS can improve control compared with scattered local experimentation, but only if access policies are designed carefully.

Long-term operating cost is another key factor. A team may focus on monthly server pricing at first, but the real cost also includes time spent on setup, maintenance, debugging, handoff, and migration. Sometimes a smaller and simpler environment delivers better value because it allows the team to move faster with fewer operational complications.
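The point about hidden costs can be made concrete with simple arithmetic. The sketch below adds staff time to the server price; every figure in it (prices, hours, hourly rate) is a made-up example, and the function is an illustration rather than a pricing model.

```python
def monthly_cost(
    server_price: float,
    ops_hours_per_month: float,
    hourly_rate: float,
    setup_hours: float = 0.0,
    months: int = 12,
) -> float:
    """Server price plus staff time, with one-off setup time spread over `months`."""
    ongoing = ops_hours_per_month * hourly_rate
    amortized_setup = setup_hours * hourly_rate / months
    return server_price + ongoing + amortized_setup


# Hypothetical comparison at an hourly rate of 60 (any currency):
simple = monthly_cost(300, ops_hours_per_month=4, hourly_rate=60, setup_hours=10)
complex_ = monthly_cost(200, ops_hours_per_month=20, hourly_rate=60, setup_hours=40)
print(simple, complex_)  # 590.0 1600.0
```

In this made-up case the option with the lower server price costs far more per month once maintenance and setup time are counted.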

When a Japan-Based VPS Is the Right Choice—and When It Isn’t

A Japan-based VPS is not the right choice for every AI project. It is important to define where it fits well and where another option may be more suitable.

Best-Fit Scenarios for VPS-Based Fine-Tuning Projects

This approach is often a strong fit when your team is running a proof of concept, adapting an existing model, building an internal assistant, testing a domain-specific chatbot, or creating a shared remote workspace for AI development. It is also a good match when your users, developers, or target systems are in Asia-Pacific and you want a practical regional hosting base.

In those scenarios, a Japan-based VPS can offer a balanced combination of regional proximity, infrastructure control, and operational simplicity. It gives teams room to experiment and refine without immediately committing to a more complex architecture than they actually need.

Cases Where Larger Cloud GPU Platforms May Be Better

There are also cases where a VPS may not be the best tool. If you need large-scale distributed training, many GPUs working together, highly elastic scheduling, or extremely short turnaround for compute-heavy jobs, a larger cloud GPU platform may be more suitable. The same applies when your architecture already depends heavily on managed cloud services and integrated automation across a broad platform.

Choosing a VPS should not be about forcing every workload into the same infrastructure model. It should be about choosing the right level of infrastructure for the stage and scope of your project.

For many AI teams in Asia-Pacific, that right level is more modest and more practical than people first assume. If your goal is effective fine-tuning, controlled experimentation, and a reliable regional base for development, a Japan-based VPS can be a practical place to start.

Conclusion

AI fine-tuning projects succeed when the infrastructure matches the actual workload and the way the team works. In Asia-Pacific, a Japan-based VPS can be a practical choice because it combines regional relevance, operational stability, and manageability. It supports teams that want to adapt models for real business use cases without overcomplicating the environment too early.

That does not mean a VPS replaces every cloud GPU strategy. It means that for many internal AI tools, proof-of-concept projects, and targeted fine-tuning workflows, a Japan-based VPS offers a practical foundation. If your team values proximity, control, and a smoother path from experimentation to a usable service, it is an option worth serious consideration.

FAQ

Q1. Is a Japan-based VPS suitable for every AI fine-tuning project?

A1. No. A Japan-based VPS is often a practical option for proof-of-concept projects, internal AI tools, lightweight model adaptation, and regional development workflows in Asia-Pacific. However, large-scale distributed training or highly elastic GPU workloads may be better suited to a larger cloud GPU platform.

Q2. Why does server location matter for AI fine-tuning in Asia-Pacific?

A2. Server location can affect day-to-day development efficiency. Fine-tuning involves remote access, dataset transfers, monitoring, debugging, testing, and collaboration. For APAC teams, a Japan-based environment can provide a more practical regional base for these iterative workflows.

Q3. How should teams choose between a Windows VPS and a Linux environment for AI workloads?

A3. The decision should depend on the team’s operational habits and tooling requirements. A Windows VPS may be easier for teams that rely on Remote Desktop and Windows-based administration, while Linux may be a better fit for teams already using Linux-based ML workflows, containers, and command-line tooling.

Explore Japan VPS Plans for AI Development

If your team is evaluating a practical environment for AI fine-tuning, internal tools, or regional development in Asia-Pacific, reviewing Japan VPS plans can help you compare options for performance, accessibility, and operational fit.